#PYTHON JUPYTER NOTEBOOK STANDALONE FREE#

Regular IDEs do not stop compilation even when an error is detected and, depending on the amount of code, it can be a waste of time to go back and locate the error manually.

Many cloud providers and other third-party services see the value of a Jupyter notebook environment, which is why many companies now offer cloud-hosted notebooks that are accessible to millions of people. Many data scientists do not have the hardware needed for large-scale deep learning, but with cloud-hosted environments the hardware and backend configuration are mostly taken care of, which leaves the user to configure only the parameters they want.

MatrixDS is a cloud platform that provides a social-network experience combined with GitHub, tailored for sharing your data science projects with peers. It supports some of the most widely used technologies, such as R, Python, Shiny, MongoDB, NGINX, Julia, MySQL, and PostgreSQL. It offers both free and paid tiers. The paid tier is similar to what the major cloud platforms offer, in that you can pay by usage or by time. The platform provides GPU support as needed, so that memory-heavy and compute-heavy tasks can be accomplished when a local machine is not sufficient.

To get started with a Jupyter notebook environment in MatrixDS:

1. Sign up for the service to create an account.
2. You will then be taken to a Projects page. Click the green button in the top right corner to start a new project.
3. Give the project a name and description and click CREATE.
4. Set the configuration you are asked for, such as the amount of RAM and the number of cores. On a free account, you are limited to 4 GB of RAM and a single CPU core.
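Once a notebook is running, it can be useful to confirm what the environment actually provides against the RAM and core limits mentioned above. The following is a minimal sketch using only the Python standard library. The helper names `available_cores` and `total_ram_gib` are hypothetical, and the memory figure comes from the operating system, so inside a container it may report the host's memory rather than the limit applied to your project.

```python
import os


def available_cores():
    """Return the number of CPU cores this process is allowed to use."""
    # On Linux, sched_getaffinity reflects CPU pinning; elsewhere fall back
    # to the total core count reported by the operating system.
    if hasattr(os, "sched_getaffinity"):
        return len(os.sched_getaffinity(0))
    return os.cpu_count() or 1


def total_ram_gib():
    """Return total physical memory in GiB, or None if it cannot be read."""
    try:
        pages = os.sysconf("SC_PHYS_PAGES")
        page_size = os.sysconf("SC_PAGE_SIZE")
    except (ValueError, OSError, AttributeError):
        # os.sysconf or these names are not available on every platform.
        return None
    return pages * page_size / 2**30


print("Cores available:", available_cores())
print("Total RAM (GiB):", total_ram_gib())
```

If the reported values are well above the tier limits, the numbers are probably those of the underlying host, and the platform's own project settings remain the authoritative source.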

#PYTHON JUPYTER NOTEBOOK STANDALONE CODE#

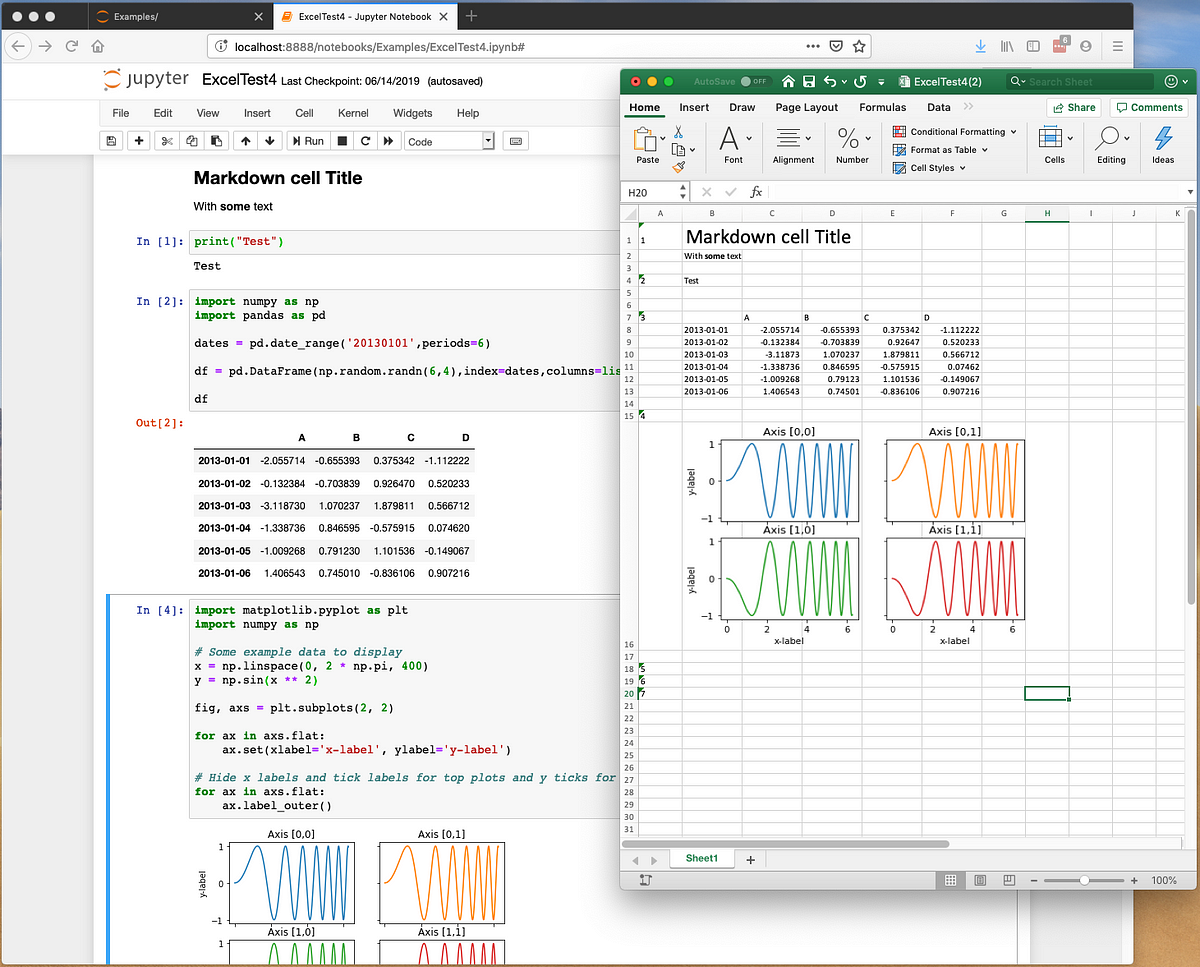

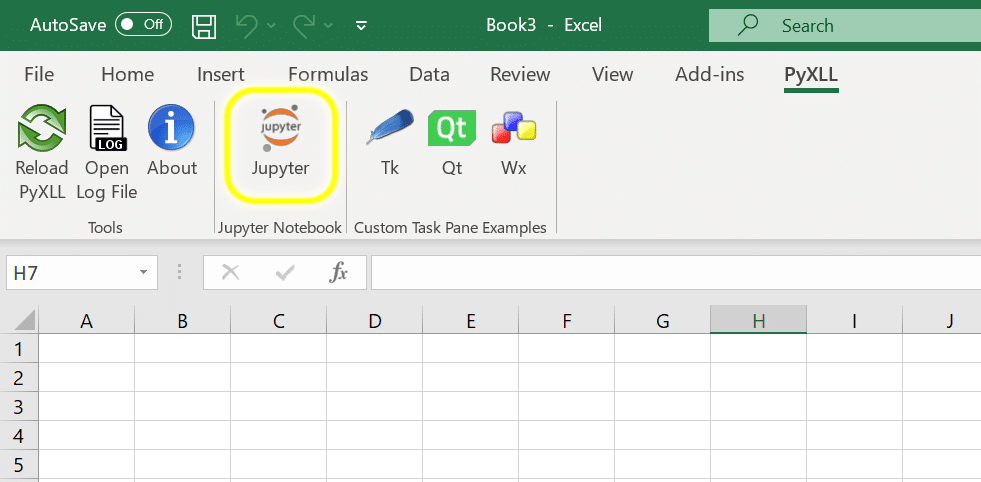

Other players have now begun to offer cloud-hosted Jupyter environments, with similar storage, compute, and pricing structures. One of the main differences can be multi-language support and version control options that allow data scientists to share their work in one place.

The Increasing Popularity of Jupyter Notebook Environments

Jupyter notebook environments are becoming the first destination on the journey to productizing a data science project. The notebook environment allows us to keep track of errors and maintain clean code. One of its best features, although simple, is that the notebook stops running your code as soon as it hits an error.

Notebooks are becoming the standard for prototyping and analysis for data scientists. Many cloud providers offer machine learning and deep learning services in the form of Jupyter notebooks.

To run code in parallel across cores on one node, you can start up workers and run your parallel code all within your notebook, as described here. (You shouldn't use the standalone Open OnDemand server for this, as it provides only a single compute core.) If you'd like to run workers in parallel across multiple nodes, this may be possible; feel free to contact us to discuss further. Alternatively, you might run your code non-interactively outside of a notebook, as discussed here.

In the past, we guided users to make use of IPython Clusters to enable parallelization in a Jupyter Python notebook. We no longer recommend this approach, and in fact the "IPython Clusters" tab no longer works in notebooks run via Open OnDemand on Savio.
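The single-node approach described above, where workers are started and used from within the notebook, might look roughly like the following with ipyparallel. This is a sketch rather than the documented Savio procedure: it assumes ipyparallel version 7 or newer is installed, the names `square` and `run_on_engines` are hypothetical, and the default of four engines is an arbitrary choice that should not exceed the number of cores your job was allocated.

```python
def square(x):
    """Toy workload; replace with your own function."""
    return x * x


def run_on_engines(func, values, n_engines=4):
    """Start local ipyparallel engines, map func over values, then shut down."""
    # Imported here so that defining this function does not start anything
    # and does not require the package until the function is called.
    import ipyparallel as ipp  # third-party; version 7+ assumed

    # The context manager starts n_engines workers on the current node,
    # connects a client to them, and stops the cluster when the block exits.
    with ipp.Cluster(n=n_engines) as rc:
        view = rc[:]  # DirectView spanning all engines
        return view.map_sync(func, values)
```

In a notebook cell, calling `run_on_engines(square, list(range(8)))` should return the squares of 0 through 7 as a list. Starting and stopping the engines on every call keeps the example self-contained; for repeated work it is usually more efficient to start the cluster once and reuse the view.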

#PYTHON JUPYTER NOTEBOOK STANDALONE HOW TO#

This document shows how to use the ipyparallel package to run code in parallel within a Jupyter Python notebook.

First, start a Jupyter server via Open OnDemand using the "Jupyter Server - compute via Slurm using Savio partitions" app.

Parallelization in Jupyter Notebooks Using ipyparallel